Google representatives confirm the impact of clicks on search results, but not necessarily in the way you expect. The hosts also discuss what Google’s Core Updates affect in search results, changes to the use of fact check structured markup, and much more.

Noteworthy links from this episode:

- Clicks influence the features that get shown in the search results

- Google no longer allows multiple instances of fact check markup per page

- Google drops safe browsing as a page experience ranking signal

- Google Core Updates Can Sometimes Impact Image Search & Local Search

Transcription of Episode 412

Ross: Hello, and welcome to SEO 101 on WMR.fm episode number 412. This is Ross Dunn, CEO of Stepforth Web Marketing and my co-host is my company’s senior SEO Scott Van Achte.

Well bud, we’re halfway through the summer now, how are you doing?

Scott: I’m doing great. It’s been a good summer and hopefully the second half of the summer is just as good as the first.

Ross: Yes, I’m enjoying it so far. I know we’re getting some rain soon so that will be good for everything. It’s dry as a bone out here and we’re all worried about fire. Hopefully any listeners who are in the fire area also get some water, or anyone deluged by smoke. Getting tired of this every year.

Anyway, let’s jump right into this. We’re gonna start with some other news that you posted, so fire away.

Scott: Yeah. So some people may wonder, when you do a Google search for whatever and you see some images or video results, you wonder what’s going on there. Why does Google show images for some searches and not for others?

This was interesting when I saw this, it was actually part of a Search Engine Land post that went into other topics like Google universal search, so it’s kind of a subsection of that article. I found it interesting because it’s just a bit more insight into what Google is doing. Even if it’s not a surprise, it’s interesting anyway and it shows that this click through rate is essentially impacting search results. Even though Google says that click through rate on organic search has no impact on rankings, it shows that they use it for this now, for images and video and what data they show in the SERPs, search engine result pages. We know that they use it from the paid perspective. I feel like if they’re not using it for organic, which they probably are even though they say they’re not, it’s probably a matter of time before they do. So it gives us a bit more idea that perhaps, things like meta descriptions and things that don’t have a direct impact on search results may end up having that much bigger of an impact due to things like click through rate, and more of an indirect help there. So it’s just a speculation.

Ross: I don’t know when that’ll happen but either way, we know that meta descriptions certainly help click throughs whether or not those click throughs have any impact on whether or not you rank is a whole ‘nother thing that’s all up in the air, Google claims it doesn’t and such. It’s quite possible they’re telling the truth, maybe that the noise from such a thing is just too, too great. There’s too many people trying to manipulate that. Anything that can be easily gamed is a difficult thing for them to clean up and utilize, so that could be an issue but it’s kind of interesting data too.

Scott: That might be a big part of why, assuming they don’t use that click through data, it isn’t being used is because if you’ve got a big enough budget and you can get these farms of people that go through and do a search for your query and click on your site, you could use that to your advantage to gain results. Although I would imagine Google is smart enough to be able to weed a large percentage of that out but it’s something you don’t have to worry about if they don’t use that metric.

Ross: To encapsulate all this, what really comes to this, what we do know, is that the reason we see videos or the reason we see images, like those… What do you call them? The slideshares you see?

Scott: The carousel?

Ross: Carousel! Thank you! is because someone has clicked on images and they want to see the information. It was like, “well, looks like there’s enough interest to show images on this query so now we’re gonna start showing that carousel.” Then they’ll, I’m sure, just see whether or not it increases the user experience because I’m sure everything’s tested. This data is highly useful for them because they want to make sure that everyone who goes to their website stays on there and uses more and more and more of it. All very logical and it’s nice to have a little peek into how things work. Cool.

Next, okay, so the next bit here is SEO news. Google no longer allows multiple instances of fact check markup per page. This was interesting because I had no idea this existed.

Scott: I feel bad. I didn’t either.

Ross: Yeah, there is so much structured data out there and this is a very focused, unique structured data use. I went through it to understand it a little bit better but first, let’s quote here, and I guess this is from Barry?

Scott: You know what, I believe it was, I can check for you.

Ross: Okay. This quote here, I assume, is Barry talking: “to be eligible for the single fact check rich result, a page must only have one ClaimReview element. If you add multiple ClaimReview elements per page, the page won’t be eligible for the single fact check rich result.” Now, what the hell does that mean? When it comes down to it, ClaimReview is used to, say, let’s say you have an article and you’re talking about, an example he uses is, flat earthers. They’re talking about, “if you get to the end of the world, you’ll fall off.” ClaimReview would state that this is what they said, this is who said it, and we fact-checked this and concluded it’s false. Here’s the information about what we did. Essentially, it’s a chance for you to show up underneath a statement that needs to be fact checked within Google search results and then you will show up as one of the fact checkers. Obviously, based on anything we know about Google, you won’t show up for this unless you are highly authoritative and someone they’ve just determined they can trust. There’s quite a list of considerations for whether or not you’re going to appear, including following Google’s guidelines strictly. There’s a whole bunch of information on the actual structured data, and there’ll be a link to it in the show notes. To quote them, this is when it’s used: “If you have a web page that reviews a claim made by others, you can include ClaimReview structured data on your web page. ClaimReview structured data can enable a summarized version of your fact check to display in Google search results when your page appears in search results for that claim.”

Anyway, who knew that you could have multiple instances? So if you do, you can’t do that anymore. You just need to build one and hopefully you’ll show up for it, but only if you’ve built quite an authority with Google.
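For listeners who have never seen this markup, here is a minimal sketch of what a single ClaimReview element might look like, built in Python and serialized as JSON-LD. The property names follow the schema.org ClaimReview type as we understand it; the URL, organization names, rating scale, and the helper functions `build_claim_review` and `count_claim_reviews` are all hypothetical and only there for illustration. Check Google's own documentation before relying on any of it.

```python
import json


def build_claim_review(page_url, claim_text, claimant, reviewer, verdict, rating_value):
    """Build one ClaimReview object as a plain dict (all values are illustrative)."""
    return {
        "@context": "https://schema.org",
        "@type": "ClaimReview",
        "url": page_url,                      # the page that hosts the fact check
        "claimReviewed": claim_text,          # short summary of the claim
        "itemReviewed": {
            "@type": "Claim",
            "author": {"@type": "Organization", "name": claimant},  # who made the claim
        },
        "author": {"@type": "Organization", "name": reviewer},      # who checked it
        "reviewRating": {
            "@type": "Rating",
            "ratingValue": rating_value,
            "bestRating": 5,                  # assumed 1-5 scale
            "worstRating": 1,
            "alternateName": verdict,         # human-readable verdict, e.g. "False"
        },
    }


def count_claim_reviews(json_ld_blocks):
    """Count ClaimReview objects among the JSON-LD blocks found on one page."""
    return sum(1 for block in json_ld_blocks if block.get("@type") == "ClaimReview")


def to_script_tag(markup):
    """Wrap the markup in the script tag that would go into the page's HTML."""
    return '<script type="application/ld+json">\n' + json.dumps(markup, indent=2) + "\n</script>"


review = build_claim_review(
    page_url="https://example.com/fact-checks/flat-earth",   # placeholder URL
    claim_text="You will fall off if you reach the end of the world",
    claimant="Example Flat Earth Group",                     # placeholder name
    reviewer="Example Fact Checker",                         # placeholder name
    verdict="False",
    rating_value=1,
)
page_blocks = [review]

# Per the quote above, only pages with exactly one ClaimReview stay eligible.
eligible = count_claim_reviews(page_blocks) == 1
```

Calling `to_script_tag(review)` gives the snippet you would paste into the page. If you have several claims to review, the one-per-page rule suggests giving each claim its own page with its own single block.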

Scott: Realistically, it kind of makes sense that you should only have one on a page and have a page for each claim you’re trying to review. It just seems like good SEO and content management for your site to break things up into multiple pages in most cases, anyways.

Ross: Yeah, geez. Who would try to get more and more out of a single page?

Scott: I don’t know.

Ross: Black hats?

Scott: I love it when little bits of news like this come out because we haven’t, to the best of my knowledge, ever had a client who did fact checking and this sort of stuff. There’s so much out there that doesn’t cross our desks until it does. Now if we do get that from one of our clients, we’ll know exactly what’s going on.

Ross: Yeah, and it probably will change already by that point.

Scott: We’ll have to read, we have to look it up anyway.

Ross: Yeah, exactly. At least we know it’s there. We’re not just going “huh?” Anyway, that doesn’t happen very often, thankfully. All right. What is this about Safe Browsing?

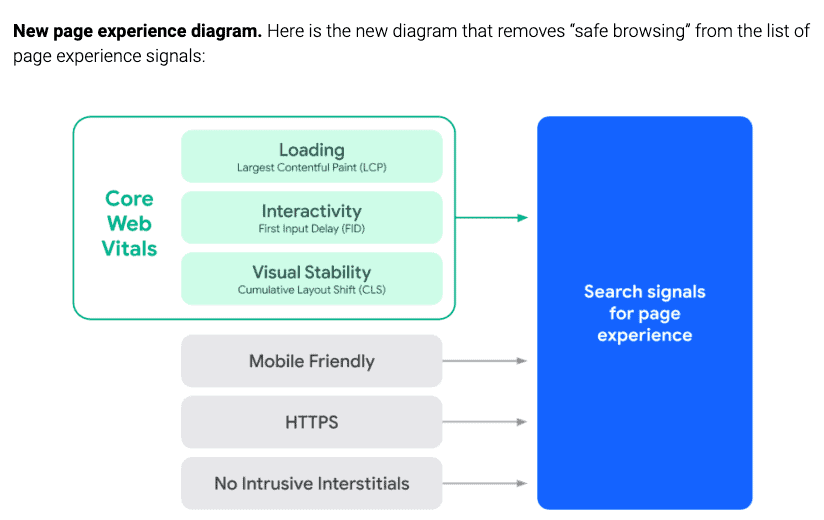

Scott: Yeah. So as anyone that listens to this show knows, the Google page experience update is still rolling out. It started in June and will continue for, probably, a few more weeks. One of the signals in that update involved Safe Browsing, and Google has decided that it is no longer a signal in the page experience update.

Ross: Well, yeah. You still have to have access to your Google Search Console to even know if you’ve been hacked and you weren’t aware of it. We find it all the time, we get new websites and we put our own scanner on it to double check. We have a service where we’re doing that every month. We implement this plugin and it goes through the site, looks for any issues and then locks it down so it’s not easily hacked again, or hacked at all. Oftentimes it finds hacks, and the client has no idea and the fact is, they really wouldn’t, because most of the hacks appear as individual pages that have been added to the site and start ranking. One good way to find out if you have been hacked is to do a site: search, so site: followed by your domain, for example site:stepforth.com, and then you’ll see a fair number of the pages that Google has indexed. Start looking through there and see what kind of stuff appears. That’s a very time intensive way. Another one is to use a tool like SEMrush and yes, you do need an account but if you use that, and just check your website to see what it’s ranking for, if you start seeing some really weird, very often rude rankings appearing, that’s a problem. There’s probably some pages on your site that have been completely rewritten or completely added, or they’re brand new, but they’re ranking. You can find out quickly that there’s been a hack and that there’s a backdoor that’s been added. So just cleaning the hack won’t fix it, you need to find the backdoor that was used, or first of all, how other people got in there and then look for the backdoor that they left there to get back in again once you’ve locked it, because they do that as well. It’s not easy. Plugins will help with that, though and it is important to do. So it is good that Google tells you about this, if they see an issue like that, they will let you know. The only way you’ll know is Google Search Console, if they find it.

Scott: Even that is interesting because depending on how sophisticated the hackers are, I mean, you could run a spider on your website and not pick up these issues because they’ll link to those pages from third party sites. So in a crawl, they may be orphaned on your site. If they’ve done a good enough job with the spam content, Google may not even pick it up. They’re just gonna think it’s a low quality page on your site. So you really want a full attack plan: spider your site with a crawler, check Google Search Console, if you have access to plugins or if you’re one of our clients, we have other plugins that help look for this stuff. You really need to be proactive because if you get hacked, I mean, we’ve had clients in the past come to us and wonder what’s going on. We do an audit and sometimes it’s not obvious that they’ve been hacked and then you find it like, “Oh, look at this. This is bad.” These hacks can be super damaging over time.
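One rough way to automate part of that checking is to compare the pages you know you published against the pages that show up as indexed or ranking. The sketch below assumes you already have both lists, for example a sitemap export and a manual copy of site: search or rank-tracking results; it does not call any Google or SEMrush API, and the function names and example URLs are hypothetical.

```python
from urllib.parse import urlsplit


def normalize(url):
    """Reduce a URL to host + path so trivial variations compare as equal.

    Scheme, a leading "www.", trailing slashes, and query strings are ignored.
    """
    parts = urlsplit(url.strip())
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    path = parts.path.rstrip("/") or "/"
    return f"{host}{path}"


def find_unexpected_pages(known_urls, observed_urls):
    """Return observed pages that are not in the list of pages you published."""
    known = {normalize(u) for u in known_urls}
    observed = {normalize(u) for u in observed_urls}
    return sorted(observed - known)


# Pages you know about (e.g. from your sitemap). Placeholder URLs.
known_pages = [
    "https://www.example.com/",
    "https://example.com/services/",
]

# Pages seen in the index or in ranking reports. Placeholder URLs.
observed_pages = [
    "https://example.com",
    "http://example.com/services",
    "https://example.com/cheap-pills-online",
]

suspicious = find_unexpected_pages(known_pages, observed_pages)
```

Anything left in `suspicious` is only a candidate for a closer look, not proof of a hack: it could just as easily be a legitimate page missing from the sitemap.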

Ross: Yeah, and they’re obviously not so good for your authority so you want to get them cleaned up quickly.

Scott: And make sure everything’s updated. We had one client, she was getting hacked regularly. She was running an old proprietary content management system and she just kept getting hacked and we kept saying, “you need to update your website, you need to update” I think she was running an old version of JavaScript as well. It was just bad and she just refused to make any changes and we just kept fixing it every time she got hacked and it was all we could do.

Ross: But that starts to add up financially, you know, we have to charge for that time.

Scott: Website redesign or content management switch should be way cheaper in the long run.

Ross: Yeah, nevermind the impact on her business.

Scott: Oh, it was huge.

Ross: Nothing to joke about, that is for sure. Although we always try to find a way.

Scott: I don’t think we joked about it too much today.

Ross: No, not with her. No.

Scott: Oh, not with the client. Never with the client. No.

Ross: Look at us dancing around here. Well, let’s take a quick break and when we come back, we got a little more news to share. It’s a bit of a light news week, but we have some more. So be right back.

Welcome back to SEO 101 on WMR.fm. Hosted by myself, Ross Dunn, CEO of Stepforth Web Marketing and my company’s senior SEO Scott Van Achte.

Right. So watch out for those hacks. No good. Probably came across.

Now, Google core updates can sometimes impact image search and local search.

Scott: I just love ‘it depends’. It’s like the SEO version of a drinking game. Every time someone says ‘it depends’, you take a shot. You go to any conference, you read any article, you talk to any client, it’s ‘it depends’ and it’s true. There’s no other way to say it. It’s so true.

Ross: It is. Yeah. All right. So Mueller files here. Google: website downtime shouldn’t impact search rankings. Oh, yeah. Fire away on this one.

Scott: Yeah, it shouldn’t. So a website owner posted to Reddit recently, and it’s funny I’m seeing Reddit around a lot more, I feel like they’ve seen a big spike maybe due to COVID. I don’t know. They were always big, but seem bigger now. Anyways, a website owner posted to Reddit asking if lost Google rankings could be recovered after almost five days of downtime. They noted a technical issue took the website offline resulting in a loss of 10,000 visitors a day. Ouch. So John advised that the downtime should not create a permanent ranking drop, noting that “that should pop back in a week or two. If it takes longer than that, the drop wouldn’t be from the downtime.” He went on to elaborate that “this is essentially just a technical issue.”

If the URL returns an HTTP header status of 5xx, so that’s like server errors and that sort of thing, or the site is unreachable, they’ll retry over the next few days and nothing will happen. No ranking drops or indexing changes until, I wrote here, a few days have passed. If the URL returns an HTTP 4xx, so that would be a 404 Not Found Error, then they will start dropping those URLs from their index. So this is good to know because often, that happens to our clients, happens to all of us. Your website crashes for maybe a day or two due to a hosting issue, or maybe you’ve been hacked, and it’s all wiped out or something. If that does happen, as long as you get it back up and running in a reasonable amount of time, you will not see any ranking issues as a result of that, and if you do, they’ll be very short-lived, within a few days. Rightfully so, if your site’s serving a 500 error, or a Not Found Error, it shouldn’t be in results. Google doesn’t want to send people to a site that is not working. What this also tells me, is that if your site is down and you know it’s going to be down for any length of time, make sure it’s serving a 5xx error and not a 4xx or Not Found because the Not Found is more likely to have a quicker negative effect in search results. Like John had said, they’ll start dropping those URLs. Yes, you’ll most likely get it back. Long term, everything should be fine but if it’s serving a 5xx, your downtime and lost rankings will be reduced. So make sure you’re serving the appropriate errors when things go bad.

Ross: However you manage to do that, is another question entirely.
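As one possible answer to that question, here is a minimal sketch of a stand-in server that answers every request with a 5xx status during planned downtime. It uses only Python's standard library; choosing 503 with a Retry-After header, the one-hour value, and the helper names are our assumptions, not something from John's comments. In practice most sites would do the equivalent in their web server or hosting configuration rather than in a script like this.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

RETRY_AFTER_SECONDS = 3600  # assumed outage estimate (one hour); adjust to your case


def maintenance_response():
    """Return (status, headers, body) for a temporary-outage reply."""
    body = b"Down for maintenance. Please check back soon.\n"
    headers = {
        "Retry-After": str(RETRY_AFTER_SECONDS),   # hint for when to come back
        "Content-Type": "text/plain; charset=utf-8",
        "Content-Length": str(len(body)),
    }
    return 503, headers, body                      # 5xx, never a 404


class MaintenanceHandler(BaseHTTPRequestHandler):
    """Send the same 503 reply for every path on the site."""

    def _reply(self, include_body):
        status, headers, body = maintenance_response()
        self.send_response(status)
        for name, value in headers.items():
            self.send_header(name, value)
        self.end_headers()
        if include_body:
            self.wfile.write(body)

    def do_GET(self):
        self._reply(include_body=True)

    def do_HEAD(self):
        self._reply(include_body=False)


def make_maintenance_server(host="127.0.0.1", port=8080):
    """Create (but do not start) the stand-in server."""
    return HTTPServer((host, port), MaintenanceHandler)
```

To try it locally you would call `make_maintenance_server().serve_forever()` and request any path; every URL should come back as 503 rather than Not Found, which is the distinction Scott is describing.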

Scott: That was a mouthful

Ross: Yeah. I think that it is a bit of encouragement when sites are being transitioned or updates are being made to websites, we’ve often seen them go down on live. Not the ones we’re working on because when we do those changes, we do it in a sandbox, in a place where we can test them before they’re going live but we’re not always the one making the changes and we end up dealing with clients who’ve used an outside developer and they weren’t quite as careful. It’s good to know, though, that if there is a ranking drop or whatever, that it’s not going to be long term. Obviously, that doesn’t make up for the fact that potential clients, customers may have gone to the website and seen nothing or seen something broken, which is certainly not an indicator of quality, reliability, etc. That’s not gonna be good for business, but at least part of it is a little better than it might have been. Alright, so we were jumping right into some thoughts that I agree Scott should share before we end the show.

Scott: Yeah, so this kind of goes, if you are a website owner and you hire an SEO to do work for you, I’d like you to keep this in mind because it can really impact the effectiveness of your campaign. As an example, we have one client who’s a great guy, does really well, we’ve been quite successful with him but early on, we kind of had some issues where he would make changes to the website without letting anybody know. The rankings would drop or have negative effects and then we’re left scrambling and he’s like, “Well, why did my ranking for such and such a term drop?” And I’m like, “I don’t know, there’s no reason for it to have dropped.” So then we start digging, only to find that he’s made some critical changes to things that shouldn’t have been made at all or should have been made in a different manner. The reason I bring it up is, you know, he made some changes or he wants to make some changes and he contacted me a couple days ago and ran it past me first, which is exactly what you should be doing. I wanted to bring that up and say, if you’re running an SEO campaign and you’re planning any changes for your website, it could be as simple as changing a paragraph of text on a core page, or something more significant like a URL structure change, or major content changes, or design changes. At the very least, tell your SEO that you’ve done it, but preferably, run them past your SEO first and be like, “I want to make these changes. What do you think?” and get their opinion. As an SEO, there’s nothing worse than, you know, maybe you start off and you’re just checking rankings or traffic for a client and you see things have crashed and then you’re trying to troubleshoot why. Only to find it’s actually the client who made a critical error without running it past you and it totally could have been avoided. It happens far more often than I’d like to admit. Not that it’s any fault of ours but I care about my clients. 
I’m sure most SEOs do and it hurts when you see these big issues pop up, especially when they’re fully avoidable.

Ross: Yeah, and we’ve done a lot of work to get the clients where they are and they have too. We don’t want to see anything like this completely sabotage our work and theirs. One of our longest-standing clients, they are no longer with us because in an awesome twist of events, they outgrew us. They got so big but I started with them from the beginning, when they were only a few years old and a small business and we were with them 21 years, we did their marketing. Over that time, no matter how many times we told them, they would just launch websites without telling us.

Scott: That’s the worst.

Ross: Rewriting everything and we’d scramble because they were a very important client. Again, no matter what we said, they would do it. It was incredibly frustrating. Thankfully, we were able to rescue them every time but it’s not something you ever want to put on top of your SEO, and you don’t want to risk yourself.

Scott: I know the exact client you’re talking about. Yeah, and I think during my time with Stepforth, which has been a long time now. Oh man, I think they launched, probably, a half dozen redesigns over the years, as a surprise like “surprise!” and they weren’t small redesigns. It was full on, the architecture of the website, all the URLs, the content was like a full blown massive redesign and redevelopment with no word that it was coming down the pipes until it was done. They’d always have all these 404 errors and the rankings would crash short term because luckily, even back then, they always had huge authority because we had done a good job with them and what have you, not to toot our own horns, but we had, and we were always able to get them back. Oh man, that was always you know, you come back from vacation, “why are my rankings crapped out?” “I don’t know. Let’s look at your site. Oh, well, that’s why”

Ross: “Whose website is this?”

Scott: I know. You totally shifted. You didn’t used to sell these things before, I don’t know. Oh, good times.

Ross: Yes. I knew you’d feel the pain.

Scott: You know what, that’s the worst.

Ross: It was usually on a Friday, right? Just before your weekend

Scott: It is! It’s like, “Okay, I’m gonna go for the weekend. I’ve got a big party tonight. Oh, wait, what? Okay.”

Ross: “What happened to our rankings?” Getting angry at you.

Scott: Yeah, they’ll give you a call in panic mode, great. Just listen to your SEO, share, and communicate. We’re a team, the SEO and the client are a team and if they act like a team, the campaigns will be way more successful.

Ross: Yeah, it’s not all sweet-smelling roses being an SEO?

Scott: Sometimes.

Ross: Sometimes. Yes, indeed.

Well, on behalf of myself, Ross Dunn, CEO of Stepforth Web Marketing and my company’s senior SEO Scott Van Achte, thank you for joining us today.

Scott: Thanks for listening, everyone.

Mueller Files: